
EMR supports graphics and many programming languages. These are web pages where you can write code. EMR also can host Zeppelin and Jupyter notebooks.This lets you use SQL, which is a lot shorter and simpler, to run MapReduce operations. So, Amazon EMR typically deploys Apache Pig with EMR. Though EMR was developed primarily for the MapReduce and Hadoop use case, there are other areas where EMR can be useful: You could then feed the new reduced data set into a reporting system or a predictive model etc. That’s because Hadoop and Spark can scale without limit-it can spread the load across servers. This idea and approach can scale without limit. If that does not seem too exciting, remember that Hadoop and Spark lets you run these operations on something large and messy, like records from your SAP transactional inventory system. The reduce step would then sum each of these tuples yielding these word counts: (James, 3) MapReduce would then create this set of tuples (pairs): (James, 1) To illustrate, let’s say you have these sentences: James hit the ball. (Summing 1 is the same as counting, yes?) So, if a set of text contains wordX 10 times then the wrestling (wordX,10) counts the occurrence of that word. The map step creates this tuple (wordX, 1) then sums the numbers 1. The WordCount program does both map and reduce. (Hello World is the usually the first and simplest introduction to any programming language.) To illustrate what this means, the Hello World programming example for MapReduce is usually the WordCount program. Reduce means to count, sum, or otherwise create a subset of that now reduced data. It means to run some function or some collection of data. The concept of map is common to most programming languages. That’s probably why EMR has both products. You might say that MapReduce is a little bit old-fashioned, since Apache Spark does the same thing as that Hadoop-centric approach, but in a more efficient way. 
These run MapReduce operations and then optionally save the results to an Apache Hadoop Distributed File System (HDFS). Apache MapReduce is both a programming paradigm and a set of Java SDKs, in particular these two Java classes: Elastic refers to Elastic Cluster, better known as EC2. The name EMR is an amalgamation for Elastic and MapReduce.

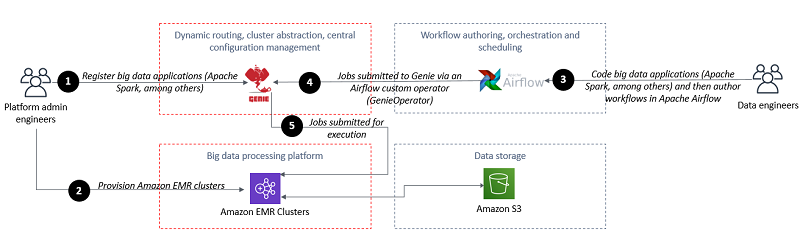

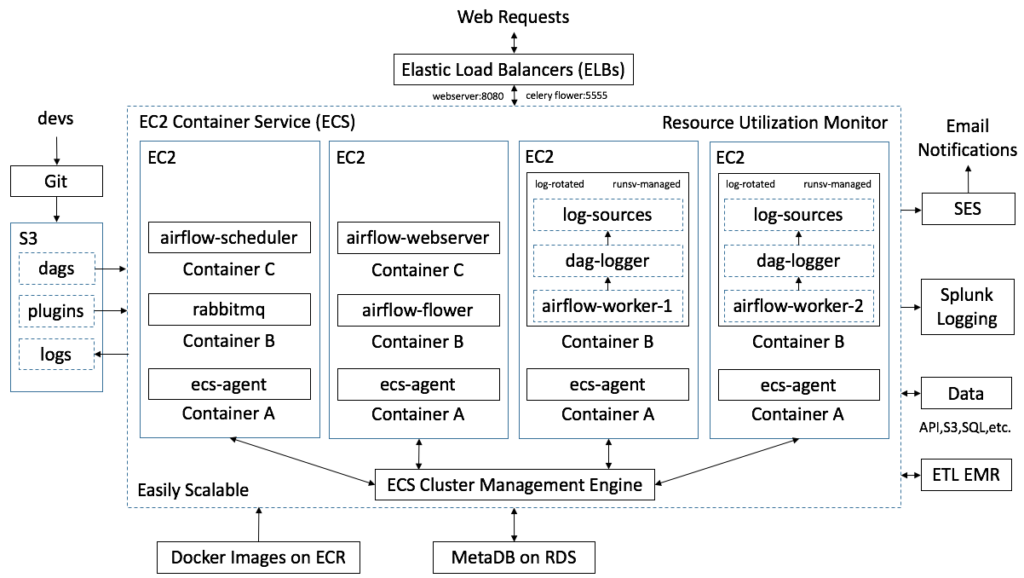

You can see that it installs some of the products that normally you use with Spark and Hadoop, like: What goes into EMR? Here is the configuration wizard for EMR. But it might cost less to have Airflow install all of that and then tear it down than the alternative: you leaving idle hardware running, especially if all of that is running at Amazon, plus perhaps paying a big data engineer to write and debug scripts to do all of this some other way. All the products it installs are open source. The point here is that you don’t need Airflow. So, it might be better to use Airflow than the alternative: typing spark-submit into the command line and hoping for the best. Provides a feedback loop in case any of that goes wrong.Lets you bundle jar files, Python code, and configuration data into metadata.Plus, it’s simpler to use Airflow and its companion product Genie (developed by Netflix) to do things like run jobs using spark-submit or Hadoop queues, which have a lot of configuration options and an understanding of things like Yarn, a resource manager. Optional surge capacity (which, of course, is one element of cost).Benefits of Airflowįor most use cases, there’s two main advantages of Airflow running on an Apache Hadoop and Spark environment: That’s important because your EMR clusters could get quite expensive if you leave them running when they are not in use. Amazon products including EMR, Redshift (data warehouse), S3 (file storage), and Glacier (long term data archival)Īirflow can also start and takedown Amazon EMR clusters.

What is Apache Airflow?Īpache Airflow is a tool for defining and running jobs-i.e., a big data pipeline-on:

We’ll take a look at MapReduce later in this tutorial. That’s the original use case for EMR: MapReduce and Hadoop. What is Amazon EMR?Īmazon EMR is an orchestration tool to create a Spark or Hadoop big data cluster and run it on Amazon virtual machines. In this introductory article, I explore Amazon EMR and how it works with Apache Airflow.
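To make the WordCount idea from this article concrete, here is a minimal sketch in plain Python. It is not Hadoop or Spark code and it runs in a single process; it only mimics the map step (emit a (word, 1) tuple per word) and the reduce step (sum the 1s per word). The function names `map_step` and `reduce_step` are made up for illustration, and the second and third sentences are hypothetical, added only so that James appears three times.

```python
import re
from collections import defaultdict


def map_step(sentences):
    """Emit one (word, 1) tuple for every word occurrence."""
    pairs = []
    for sentence in sentences:
        # Split on letters only, so punctuation such as "." is dropped.
        for word in re.findall(r"[A-Za-z']+", sentence):
            pairs.append((word, 1))
    return pairs


def reduce_step(pairs):
    """Sum the 1s for each word, which is the same as counting it."""
    counts = defaultdict(int)
    for word, one in pairs:
        counts[word] += one
    return dict(counts)


sentences = [
    "James hit the ball.",
    "James caught the ball.",   # hypothetical sentence
    "James dropped the ball.",  # hypothetical sentence
]

pairs = map_step(sentences)
word_counts = reduce_step(pairs)

print(pairs[:4])
print(word_counts)
```

On a real cluster, the map and reduce steps would run in parallel on many servers, each handling a slice of the data; the logic per word stays the same.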
